DIY CPU

#1

Boost Pope

Thread Starter

iTrader: (8)

Join Date: Sep 2005

Location: Chicago. (The less-murder part.)

Posts: 33,026

Total Cats: 6,592

It's not often I get real excited over something I see at hackaday. Increasingly, it's mostly just neat software tricks, teardowns of consumer electronics, and so on.

But This Guy has my respect. He's designed and built a CPU from scratch, using all discrete logic, and then constructed a fully functional computer around it.

Apparently a couple of other folks have taken on similar tasks as well:

D16.html

Mark 1 FORTH Computer

Wowsers...


#4

Originally Posted by D16.html

The D16/M is a general-purpose, stored-program, single-address, 16-bit digital computer using two's complement arithmetic. It manages subroutine calls and interrupts using a memory stack. . .

The D16/M is some kind of computer that does something. It does something and then does something else using something. . .

#5

Elite Member

iTrader: (1)

Join Date: Feb 2008

Location: Birmingham Alabama

Posts: 7,930

Total Cats: 45

I'd call myself well above average in computer competence, but this kind of thing would be completely over my head. I'd love to be able to do this kind of thing. Maybe I could with enough studying, but I have never looked into it. Bad *** stuff though. I have too many other hobbies I enjoy more. Besides, building computers and keeping them running using tried and tested parts is enough of a pain in the ***, let alone parts you made yourself (or soldered together).

#6

Boost Pope

Thread Starter


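stored-program = one whose program sits in memory as a list of instructions that the CPU fetches and executes one at a time, as opposed to a machine whose "program" is fixed in its wiring (plugboards, patch cables, hardwired sequencers). Whether that memory is RAM or ROM doesn't matter here; an older cartridge-based videogame console running out of ROM is still a stored-program machine.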

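single-address = most likely a description of the instruction format: each instruction names a single memory address, and the other operand is implied (typically an accumulator register), as opposed to two-address or three-address machines where one instruction can name several operands. It is sometimes also read as meaning a single, large address space for accessing RAM, I/O, etc., as opposed to separate address spaces (and presumably separate busses) for different functions, but the instruction-format meaning is the usual one in a summary like this.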
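To make "stored-program" a bit more concrete, here's a toy sketch in Python. It also uses the textbook one-address instruction style, where each instruction names one memory location and an accumulator is the implied second operand. Everything in it is made up for illustration: the opcode names (`LOAD`, `ADD`, `STORE`, `HALT`), the memory layout, and the `run` function have nothing to do with the D16/M's real instruction set.

```python
MASK = 0xFFFF  # keep the accumulator to 16 bits, like a 16-bit machine would

def run(memory):
    """Execute a toy program stored in the same memory as its data.

    Each instruction is a hypothetical (opcode, address) pair; plain
    integers in the list are data words.
    """
    acc = 0  # the accumulator: implied operand of every instruction
    pc = 0   # program counter: index of the next instruction to fetch
    while True:
        op, addr = memory[pc]  # fetch the instruction from memory
        pc += 1
        if op == "LOAD":
            acc = memory[addr]
        elif op == "ADD":
            acc = (acc + memory[addr]) & MASK  # wrap around at 16 bits
        elif op == "STORE":
            memory[addr] = acc
        elif op == "HALT":
            return memory
        else:
            raise ValueError(f"unknown opcode {op!r}")

# Addresses 0-3 hold the program, addresses 4-6 hold the data.
program = [("LOAD", 4), ("ADD", 5), ("STORE", 6), ("HALT", 0), 2, 3, 0]
result = run(list(program))
print(result[6])  # 5
```

The point is just that the instructions and the numbers they work on live side by side in one memory, and the CPU walks through them with a program counter.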

16-bit = the width of the bus and the size of all of the internal registers, ALUs, etc. are 16 bits.

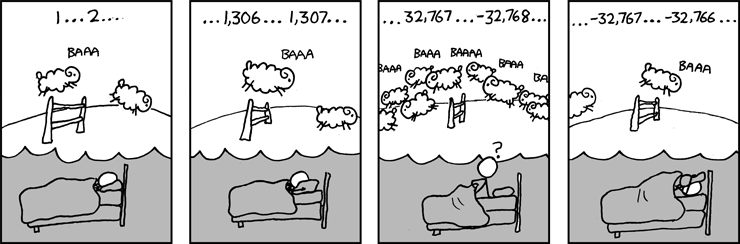

two's complement = a system for representing negative numbers in binary (plain unsigned binary has no way to express them) in which the MSB (most significant bit, or the "highest order" bit) of a data word acts as the sign: it is 0 for zero and positive values and 1 for negative values. Strictly, the MSB carries a weight of -2^(N-1) rather than being a bare sign flag, which is what lets the same adder circuitry handle both signed and unsigned numbers.

Normally, the largest value which can be represented by a binary number of N bits length is 2^N - 1, however in two's complement the largest value is 2^(N-1) - 1, with the benefit that you are now able to express negative numbers all the way down to -2^(N-1).

Since one bit is being "stolen" to represent signing, the largest positive number that can be expressed on such a machine is roughly 1/2 that which would be expected from the size of the word. Normally, 16 bits is sufficient to express values from 0 to 2^16 - 1 (65,535), however in two's complement the range of expressible numbers is -2^15 (-32,768) to 2^15 - 1 (32,767).
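For anyone who wants to poke at the numbers, here's a quick Python sketch of 16-bit two's complement encoding and decoding. The function names (`to_word`, `from_word`, `signed_range`) are my own, and Python integers are unbounded, so the 16-bit limit is imposed by hand with a mask.

```python
BITS = 16  # word size taken from the D16/M description

def signed_range(bits=BITS):
    """Smallest and largest values a two's complement word can hold."""
    return -(1 << (bits - 1)), (1 << (bits - 1)) - 1

def to_word(value, bits=BITS):
    """Encode a signed integer as the raw bit pattern stored in the word."""
    lo, hi = signed_range(bits)
    if not lo <= value <= hi:
        raise OverflowError(f"{value} does not fit in {bits} bits")
    return value & ((1 << bits) - 1)

def from_word(word, bits=BITS):
    """Decode a raw bit pattern; the MSB carries a weight of -2^(bits-1)."""
    sign_bit = 1 << (bits - 1)
    return (word & (sign_bit - 1)) - (word & sign_bit)

print(signed_range())          # (-32768, 32767)
print(hex(to_word(-1)))        # 0xffff
print(from_word(0x8000))       # -32768
```

Note that the range is lopsided by one: there's a pattern for -32,768 but not for +32,768, because zero takes up one of the "positive" patterns.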

subroutine call = a point in a program where it branches off to a subroutine (a little, self-contained set of instructions that gets used again and again, such as "calculate the cosine of this value" or "write data to the screen") with the intention of returning to the point in the program where it left off after finishing with the subroutine.

interrupt = basically the same as a subroutine, except the branch was triggered by some external stimulus (such as a peripheral requesting CPU time, or an external pin being triggered) rather than a pre-defined point in the code. As an example, on the MS1, the primary ignition trigger input is an interrupt. So every time the camshaft rotates 90° and a CKP pulse happens, the CPU is interrupted and it goes off to start the countdown cycle to fire the coil, and then returns to reading sensors, calculating pulsewidths, spitting data out to MegaTune, etc.

stack = a piece of memory which runs Last-In, First-Out. Imagine a barrel into which you toss data. The first piece goes to the bottom. The next piece of data goes on top of the first one, and the third piece of data on top of that. When you pop data back out of the barrel, you get the third piece first, then the second piece, and finally the first piece you put in.

In this context, it's being used to store the bookmarks that tell the machine where in the program to return to after the above two events. Say that the program enters a subroutine. It'll place a pointer in the bottom of the stack telling it where to go back to after the subroutine. While the subroutine is running, an interrupt happens. So it stops running the subroutine and drops another pointer into the stack telling it where in the subroutine it was. After it processes the interrupt, it picks up the first pointer on top of the stack which tells it to go back into the subroutine it was processing before the interrupt. When it's done with the subroutine, it picks the next pointer off the stack, which tells it to go back to the place in the main program where it was at before it branched off to run the subroutine.
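The subroutine-then-interrupt sequence can be walked through in a few lines of Python. This is only a toy model under my own assumptions: the `ToyCPU` class, its method names, and the addresses (100, 500, 520, 900) are invented for illustration, and a real CPU would typically save more than just the return address when it takes an interrupt.

```python
class ToyCPU:
    """Tracks only a program counter and a return-address stack."""

    def __init__(self, pc=0):
        self.pc = pc      # where the CPU will resume if it branches away now
        self.stack = []   # last-in, first-out list of return addresses

    def _branch(self, target):
        self.stack.append(self.pc)  # drop a bookmark on top of the stack
        self.pc = target

    def call(self, target):
        """Subroutine call: triggered by an instruction in the program."""
        self._branch(target)

    def interrupt(self, handler):
        """Interrupt: same mechanics, but triggered by an outside event."""
        self._branch(handler)

    def ret(self):
        """Return: pop the most recent bookmark and resume there."""
        self.pc = self.stack.pop()
        return self.pc

def demo():
    cpu = ToyCPU(pc=100)            # main program is sitting at address 100
    trace = []
    cpu.call(500)                   # main calls a subroutine at 500
    trace.append(list(cpu.stack))   # [100]
    cpu.pc = 520                    # the subroutine has run as far as 520
    cpu.interrupt(900)              # external event: jump to handler at 900
    trace.append(list(cpu.stack))   # [100, 520]
    trace.append(cpu.ret())         # handler done: back to 520
    trace.append(cpu.ret())         # subroutine done: back to 100
    return trace

print(demo())  # [[100], [100, 520], 520, 100]
```

Because the stack is last-in, first-out, the returns unwind in exactly the reverse order of the branches, no matter how deeply the calls and interrupts nest.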

So in other words, it's a pretty normal CPU.

What I find really interesting is the effect that this sort of architecture completely failed to have on personal computing in the early 1970s. It's generally acknowledged that the Altair 8800 was the first "real" personal computer, but nobody got around to inventing that until 1975, after Intel had produced the 8080 microprocessor.

A person could have just as easily created a machine like the Altair, using this sort of architecture, at least 5 years earlier. All the core logic parts were widely available. It wouldn't have been practical to put in 64k of RAM, but 2k would have been entirely adequate at that time. This is, in fact, how the CPUs of "big" computers were built at the time (and for some years to come), but nobody bothered to think small and scale down the architecture to PC-like levels.

WTF? Why the heck didn't personal computing happen sooner?

Last edited by Joe Perez; 04-20-2009 at 10:11 AM.
