The AI-generated cat pictures thread

Boost Pope

iTrader: (8)

Join Date: Sep 2005

Location: Chicago. (The less-murder part.)

Posts: 33,019

Total Cats: 6,587

It is an amusing sidebar that this conversation tends to reinforce something which I have said time and time again concerning television sets (which is how we got here in the first place). The primary reason that I continue to prefer my analog, 3-CRT HD set over a more modern LCD / Plasma set is that the analog TV simply displays the picture which is fed into it, without performing any sort of image manipulation.

It is this same phenomenon which makes comparisons between a CRT monitor fed with VGA vs. an LCD monitor fed with VGA vs. an LCD monitor fed with DVI/HDMI invalid.

The LCD monitor has to digitize the incoming signal and format it for display in a fixed-pitch environment, even when the incoming signal is already at the monitor's native resolution and framerate. An analog VGA signal does not, by definition, have discretely identifiable pixels in the horizontal plane, only moments in time derived from the Hsync pulse. The monitor works these out by dividing (Hsync period - overscan/retrace) by the "nominal" pixel resolution, and then samples the red, green and blue voltages at the appropriate times to identify what is then judged to be one pixel.

CRT monitors, by their very nature, do not suffer from this dilemma, as the incoming signal is not being sampled at discrete points, but rather used to continuously drive an analog amplifier which then powers an electron gun. It doesn't matter when the video card decides to switch from one pixel to the next, as there is no possibility for information to be lost or "blurred" between two pixels; the beam just continuously follows the incoming information, without attempting to interpret it in any way.

It is this process of digitization which introduces the uncertainty that is at the heart of the dilemma. In the absence of a discrete pixel-clock signal, the LCD monitor cannot know with certainty at what points IN TIME it should sample the incoming analog data. The monitor must essentially make a guess as to WHEN it should sample the incoming signal, based upon its estimate of when the transitions from one pixel to the next "should" be occurring relative to the horizontal sync pulse.

(This, incidentally, is what you are able to adjust with the on-screen display menu's "fine tune," "phase" or "pixel clock" settings. I'd suggest that you invest a few seconds into playing with this control, as it may well solve your fuzziness problem.)
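To make that guesswork concrete, here is a minimal sketch of how a monitor might pick its sample instants. The function name, its parameters and the example numbers are all made up for illustration; a real scaler chip does this in hardware with a PLL, not in software.

```python
def sample_times(active_start_ns, active_duration_ns, nominal_pixels, phase=0.5):
    """Estimate when to sample each pixel on one scan line.

    active_start_ns    -- assumed delay from the Hsync pulse to the first pixel
    active_duration_ns -- assumed length of the visible part of the line
                          (Hsync period minus overscan/retrace)
    nominal_pixels     -- the horizontal resolution the monitor believes it is receiving
    phase              -- where inside each pixel to sample (0.5 = the middle);
                          roughly what the OSD "phase" control adjusts
    """
    pixel_period = active_duration_ns / nominal_pixels
    return [active_start_ns + (i + phase) * pixel_period
            for i in range(nominal_pixels)]


# Hypothetical line: 4 pixels spread over 100 ns, starting right at Hsync.
times = sample_times(0.0, 100.0, 4)
print(times)
```

If the monitor's estimate of the visible duration or the pixel count is slightly off, every sample instant drifts a little further from the middle of its pixel as you move across the line, which is why a wrong "pixel clock" setting shows up as vertical bands of fuzziness.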

The output of the video card must be extremely STABLE, and the input / conversion circuitry of the LCD monitor must be extremely PRECISE in order to accurately sample the signal. Specifically, it must take the entire sample at precisely the same time that the card is in the MIDDLE of outputting a certain pixel's worth of data.

Now, to illustrate the magnitude of the problem:

In a 640x480 display with a 60Hz refresh rate, the time elapsed from one pixel to the next is around 40ns. In a 1280x1024 display, this goes down to around 9.25ns, and by the time you get to 1600x1200, it is around 6ns.
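Those figures are easy to check. The sketch below assumes the standard 60Hz VESA pixel clocks for each mode (25.175, 108 and 162 MHz); those numbers are my assumption about which timings are in play, and the per-pixel time is just the reciprocal of the clock.

```python
# Assumed standard 60 Hz pixel clocks, in MHz (not stated in the post above).
PIXEL_CLOCK_MHZ = {
    "640x480": 25.175,
    "1280x1024": 108.0,
    "1600x1200": 162.0,
}


def pixel_period_ns(clock_mhz):
    """Time spent on one pixel, in nanoseconds, for a given pixel clock."""
    return 1000.0 / clock_mhz


for mode, clock in PIXEL_CLOCK_MHZ.items():
    print(f"{mode}: {pixel_period_ns(clock):.2f} ns per pixel")
```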

In order to get an accurate sample, you have to land within a window encompassing roughly the middle one-third of the pixel period, so at 1600x1200 that means that the A/D conversion section of the LCD monitor's input needs to be accurate to within two nanoseconds in order to get a clean capture. Otherwise, you wind up capturing part of one pixel and part of another (by sampling during the transition between the two).
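As a rough sketch of that window, assuming the "middle one-third" rule of thumb above (the function names here are hypothetical, and real converters have their own tolerances):

```python
def clean_window_ns(pixel_period_ns, fraction=1 / 3):
    """Width of the window in which a sample lands cleanly inside one pixel."""
    return pixel_period_ns * fraction


def is_clean_sample(offset_ns, pixel_period_ns, fraction=1 / 3):
    """True if a sample taken offset_ns after the start of a pixel falls
    inside the middle `fraction` of that pixel's duration."""
    centre = pixel_period_ns / 2
    half_window = clean_window_ns(pixel_period_ns, fraction) / 2
    return abs(offset_ns - centre) <= half_window


# A sample taken 0.5 ns into a 6 ns pixel is still in the transition region,
# while the same 0.5 ns error from the centre of the pixel is harmless.
print(is_clean_sample(0.5, 6.0))
print(is_clean_sample(3.5, 6.0))
```

The same absolute timing error that ruins a 6ns pixel is well inside the window of a 40ns pixel, which is why a mediocre VGA input can look fine at 640x480 and awful at 1600x1200.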

You can see how an LCD monitor with a cheaply or indifferently made VGA input can easily display a horribly inferior picture as compared to one fed by a DVI/HDMI input, OR as compared to a CRT monitor fed by the same VGA input that resulted in a horrible picture on the LCD monitor.

Or, put another way, digital is not "always" better.

In Conclusion:

This is why generalizations about the suitability of the VGA system for carrying high-resolution display information are completely irrelevant in the context of an analog CRT monitor. The phenomena which cause the resultant image to become fuzzy/blurry/etc are artifacts of the process of sampling the signal and converting it to digital data, and thus, these problems are caused by the LCD monitor itself, and not by the nature of the VGA signal. CRT monitors, lacking the need to digitize the incoming signal, are thus totally immune to such problems, and the clarity of the resultant image simply does not degrade as a function of resolution.

FOR THE TL;DR CROWD:

It's not VGA's fault that your LCD monitor displays a shitty picture. It's your monitor's fault.

Moderator

iTrader: (12)

Join Date: Nov 2008

Location: Tampa, Florida

Posts: 20,646

Total Cats: 3,009

Dropping weird bombs up in this bitch:

In October 1965, CDR Clarence W. Stoddard, Jr., Executive Officer of VA-25 "Fist of the Fleet", flying an A-1H Skyraider, NE/572 "Paper Tiger II", from Carrier Air Wing Two aboard USS Midway, carried a special bomb to the North Vietnamese in commemoration of the 6-millionth pound of ordnance dropped. This bomb was unique because of the type... it was a toilet!

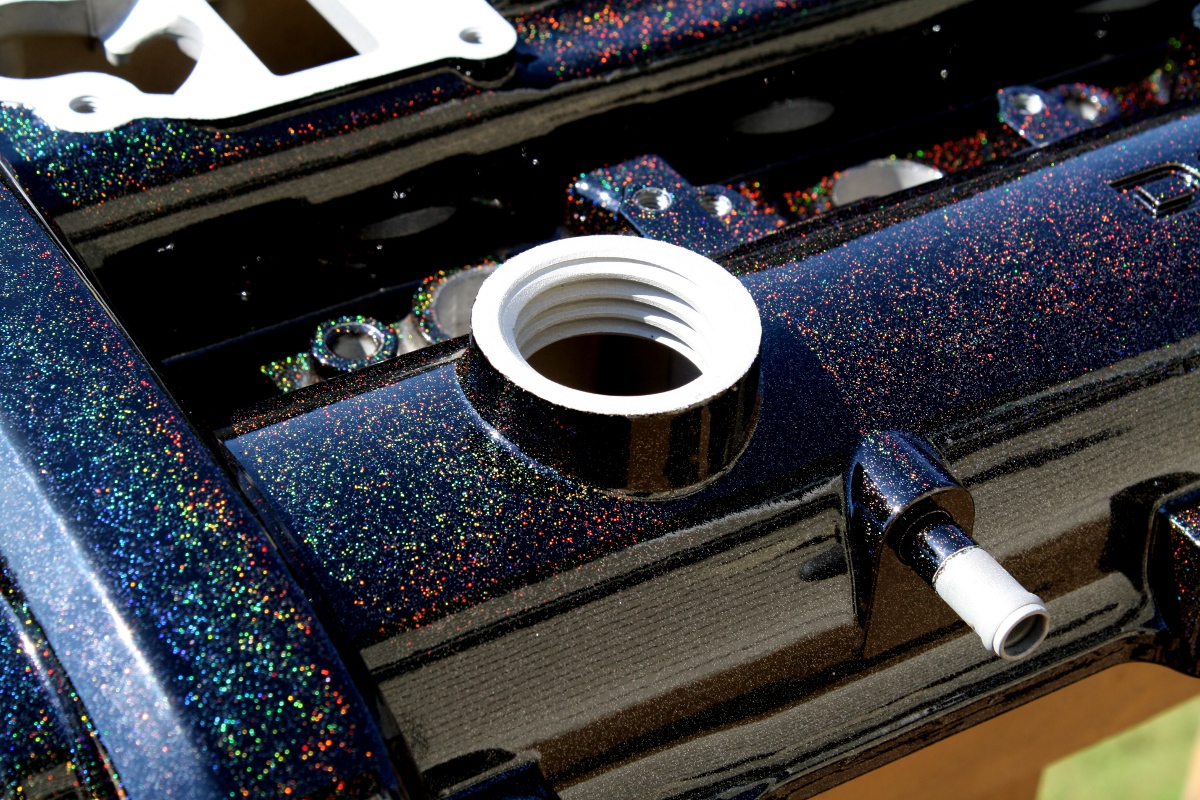

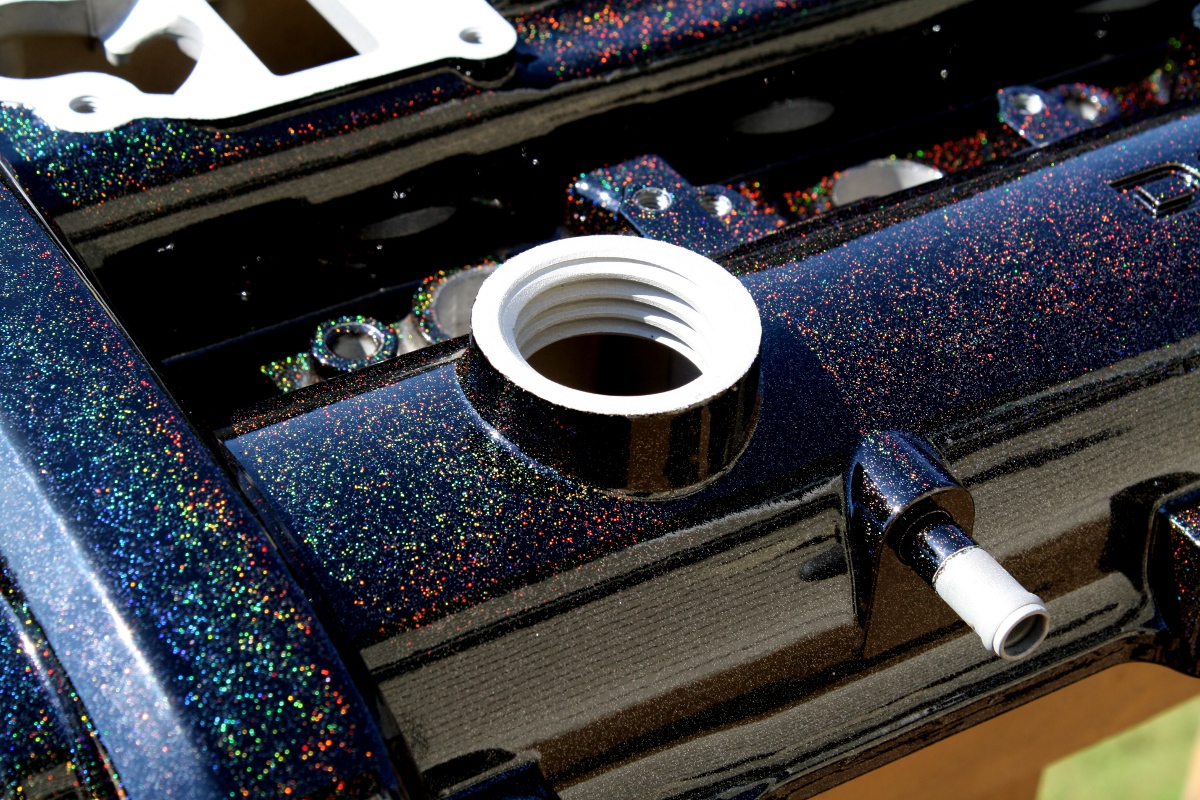

I decided to gay up my car while doing all that work on it....by powdercoating the valvecover with a nice rainbow sparkle...

Valvecover closeup by AnonymousNamelss, on Flickr

her biceps make mine look like, like, like ****...
